Software-Defined Memory: Defined

posted on December 13, 2015 by Amit Golander

A year ago the Fusion-IO folks at SanDisk made the first attempt to define software-defined memory (SDM, here), but a lot has changed since then. 2015 was a crucial year for the standardization of non-volatile DIMM (NVDIMM) components. There have also been several announcements of new storage-class memory devices, such as Intel & Micron 3D XPoint, HP & SanDisk RRAM and Sony & Viking ReRAM.

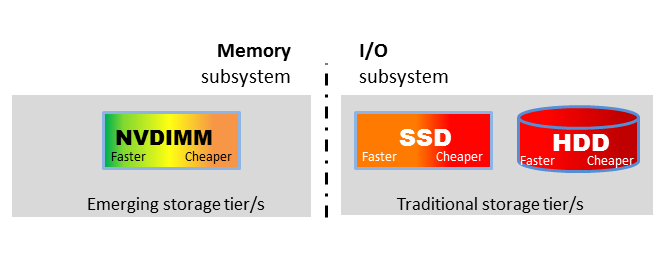

SDM should have been called SDSM, because it is not only about memory, but about the convergence of two domains that have so far been separate: Storage and Memory. Storage is a multi-layer software implementation outside the realm of Computer Architecture (i.e. the I/O subsystem). The memory subsystem is the exact opposite: it is an integral part of Computer Architecture and is implemented by hardware engineers. I know that from first-hand experience, because I worked on a memory subsystem in 2008 and then made what everyone (including me at the time) considered a “career move” to storage. In retrospect, I can say that they are two sides of the same thing: what we’ll continue to call SDM.

Figure 1 illustrates the growing range of storage devices. Devices are ordered from the fastest on the left to the cheapest on the right. Each commodity device type also comes in several implementations, some optimized for speed and others for price. For the well-known SSD box, those would be the high-performance NVMe devices (orange) and the low-cost TLC NAND-based SATA drives (red).

NVDIMM form factor devices are physically attached to the memory subsystem. Moving the data closer to the CPU is the first step in gaining lower latency and higher throughput. However, the performance gain will be limited without the right hardware and software.

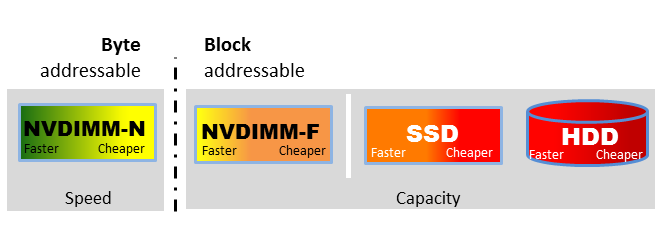

Figure 2 shows that there are two main NVDIMM types. NVDIMM-F is basically a variation of an SSD device that “happens” to reside on the memory interconnect.

NVDIMM-N, however, is a game changer. NVDIMM-N devices are fully mapped into the memory address space and can be used without the traditional block layer. That matters because the decades-old software block architecture hides most of the hardware benefits.
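To make the difference between the two access models concrete, here is a minimal sketch in Python. It assumes an ordinary temporary file as a stand-in for a persistent memory region, so it shows only the programming model, not real NVDIMM-N latency. The names `REGION_SIZE`, `make_region`, `write_via_block_style`, `write_via_mapping` and `read_region` are hypothetical and chosen for illustration.

```python
import mmap
import os
import tempfile

REGION_SIZE = 4096  # hypothetical region size for this sketch


def make_region() -> str:
    """Create a zero-filled file that stands in for a persistent region."""
    fd, path = tempfile.mkstemp()
    os.ftruncate(fd, REGION_SIZE)
    os.close(fd)
    return path


def write_via_block_style(path: str, offset: int, payload: bytes) -> None:
    """Traditional path: explicit seek/write/fsync calls through the I/O stack."""
    with open(path, "r+b") as f:
        f.seek(offset)
        f.write(payload)
        f.flush()
        os.fsync(f.fileno())


def write_via_mapping(path: str, offset: int, payload: bytes) -> None:
    """Mapped path: the region is addressed like memory and updated in place."""
    with open(path, "r+b") as f:
        with mmap.mmap(f.fileno(), REGION_SIZE) as region:
            region[offset:offset + len(payload)] = payload
            region.flush()  # ask the OS to write the modified pages back


def read_region(path: str, offset: int, length: int) -> bytes:
    """Read bytes back through a read-only mapping."""
    with open(path, "rb") as f:
        with mmap.mmap(f.fileno(), REGION_SIZE, access=mmap.ACCESS_READ) as region:
            return bytes(region[offset:offset + length])
```

In the mapped version the application addresses data by offset, much as it would an in-memory buffer, instead of issuing a read or write call per access. On a regular file the operating system still pages data through the block layer underneath; the point of a memory-mapped persistent device is that this layer can be bypassed.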

New software is essential to delivering the value of NVDIMM-N devices. That new software is SDM and it has the potential to unify traditional and in-memory compute middleware so they can share data in a standard way.

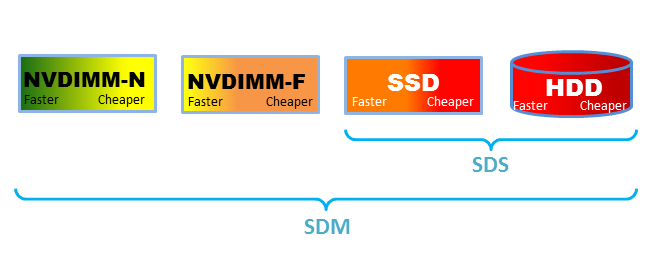

In its narrow definition, SDM is a software solution that can utilize standard, heterogeneous, off-the-shelf persistent memory devices and present them through standard APIs in a way that hides the internal complexity. Plexistor’s SDM implementation goes a step further and combines different types of devices in order to offer a reasonable price alongside groundbreaking performance levels.
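The idea of combining device types behind one interface can be illustrated with a toy two-tier store. This is a sketch under stated assumptions, not a description of Plexistor’s implementation: the class `TieredStore`, its `put`/`get`/`tier_of` methods, and the least-recently-used demotion policy are all hypothetical, and plain Python dictionaries stand in for a small fast tier and a large cheap tier.

```python
from collections import OrderedDict


class TieredStore:
    """Hypothetical two-tier store: a small fast tier in front of a large cheap one."""

    def __init__(self, fast_capacity: int) -> None:
        self.fast_capacity = fast_capacity
        self.fast = OrderedDict()  # stand-in for the fast tier, kept in LRU order
        self.slow = {}             # stand-in for the cheaper, slower tier

    def put(self, key: str, value: bytes) -> None:
        """New or updated data always lands in the fast tier."""
        self.slow.pop(key, None)
        self.fast[key] = value
        self.fast.move_to_end(key)
        self._demote_cold_items()

    def get(self, key: str) -> bytes:
        """Callers see one namespace; data found in the slow tier is promoted."""
        if key in self.fast:
            self.fast.move_to_end(key)
            return self.fast[key]
        value = self.slow.pop(key)  # raises KeyError if the key is unknown
        self.fast[key] = value
        self._demote_cold_items()
        return value

    def tier_of(self, key: str) -> str:
        """Expose placement for inspection only; callers never need it."""
        if key in self.fast:
            return "fast"
        if key in self.slow:
            return "slow"
        raise KeyError(key)

    def _demote_cold_items(self) -> None:
        # Move least-recently-used items out when the fast tier overflows.
        while len(self.fast) > self.fast_capacity:
            cold_key, cold_value = self.fast.popitem(last=False)
            self.slow[cold_key] = cold_value
```

The caller only ever uses `put` and `get`; where the data physically lives is decided by the software layer, which is the sense in which the internal complexity is hidden.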

Software-defined memory is a game-changing architecture. Converging Memory and Storage can guarantee the data safety of in-memory applications and enable them to run large working sets at lower cost. SDM also enables traditional storage-based applications to achieve near-memory performance levels.

That is the true value of SDM.
