Wasting Money is Easier than Ever

posted on March 2, 2016 by Amit Golander

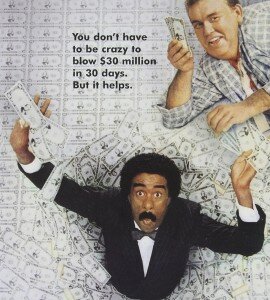

Once upon a time (1985), Richard Pryor had to work hard for a full month to waste 30 million dollars. And he almost failed…

Thirty years later, with modern infrastructure, it is barely a challenge. NoSQL middleware will easily shard across additional servers, and public cloud vendors will happily rent you as many servers as you want.

Unlike Richard Pryor, you’re not trying to waste money. It just happens.

The company is doing well and more data is being collected from an expanding customer base, so the working set continues to grow. Wanting to maintain the required performance level, you consult NoSQL best-practice documents, which instruct you to shard until the active working set fits in memory. You do as instructed, and the infrastructure cost goes up.
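To make the arithmetic concrete, here is a rough back-of-the-envelope sketch of how the "shard until it fits in memory" rule turns working-set growth into cost. Every number in it (usable memory per node, hourly price, working-set sizes) is a hypothetical placeholder rather than a figure from any vendor or from the white paper, and it deliberately ignores replication and per-node overhead.

```python
import math


def nodes_needed(working_set_gb: float, usable_memory_per_node_gb: float) -> int:
    """Smallest node count whose combined memory holds the working set."""
    if working_set_gb <= 0:
        return 0
    return math.ceil(working_set_gb / usable_memory_per_node_gb)


def monthly_cost(nodes: int, hourly_price_per_node: float,
                 hours_per_month: int = 730) -> float:
    """Rental cost for the whole cluster over one month."""
    return nodes * hourly_price_per_node * hours_per_month


if __name__ == "__main__":
    # Hypothetical inputs: 50 GB of usable memory per node at $1.00 per hour.
    for working_set_gb in (500, 1000, 2000):
        n = nodes_needed(working_set_gb, usable_memory_per_node_gb=50)
        cost = monthly_cost(n, hourly_price_per_node=1.00)
        print(f"{working_set_gb} GB working set: {n} nodes, ${cost:,.0f} per month")
```

Under this rule the node count, and with it the bill, grows in step with the working set, regardless of how much of each server's other capacity sits idle.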

It does not have to be that way.

This happens because the traditional software stack, the part you don’t even think about, extracts only a fraction of what the hardware is capable of. A smarter software stack that converges memory and storage can extract far more.

This claim was demonstrated with Cassandra on Amazon. The white paper, available here, compares the cluster sizes required to support a large working set at given throughput and latency targets. It shows that a Cassandra cluster running on top of a Software Defined Memory (SDM) solution is 2.5x to 3x smaller (and thus cheaper) than one running on traditional storage software.

With SDM, the NoSQL admin can control the cost. SDM can be transparently applied to servers hosting in-memory compute (IMC) workloads, resulting in enhanced performance, improved efficiency, and reduced cost.

You’re welcome to try it out at: plexistor.com/download/

Get Started Now
